Typing on tablets and smartphones is inconvenient. This is not only because of the missing tactile feedback, but also because the on-screen keyboard is large and takes up so much precious screen real estate, because the letters are tiny on small form factor devices, because typing requires constant visual feedback, and because it is hard to blind-type. Long story short, I tried to come up with something that would solve those problems.

I focused on several characteristics:

- Ergonomic Domain

- Memory Domain

- Form factor adjustments

- Visual-motor latency

- Precision sensitivity

- Error avoidance and correction

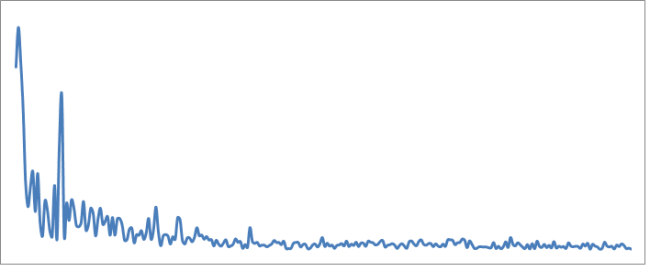

Letter frequency in English text:
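The post does not say how the frequency data was obtained or how it is used. A common way to derive such a ranking is to count letters in a large sample of English text; the sketch below does that with a hypothetical helper, `letter_frequencies`, assuming that only the letters a to z matter and that case is ignored. A ranking like this could, for example, decide which letters get the easiest slide directions, but that use is an assumption and not part of the original design.

```python
from collections import Counter


def letter_frequencies(text: str) -> list[tuple[str, float]]:
    """Return (letter, relative frequency) pairs, most frequent first.

    Hypothetical helper: only ASCII letters a-z are counted, case is ignored.
    """
    letters = [c for c in text.lower() if "a" <= c <= "z"]
    total = len(letters)
    if total == 0:
        return []
    counts = Counter(letters)
    # most_common() orders letters by count, highest first
    return [(letter, count / total) for letter, count in counts.most_common()]


if __name__ == "__main__":
    sample = "The quick brown fox jumps over the lazy dog"
    for letter, freq in letter_frequencies(sample)[:5]:
        print(f"{letter}: {freq:.3f}")
```

With a real corpus (books, chat logs, or whatever text the keyboard is expected to handle) the resulting ranking would be far more representative than a single sentence.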

…and came up with a “Distributed Typing” concept.

Standard on-screen keyboards take up half the screen, have distant and static keys, and do not leverage the fact that “there is no spoon” anymore (i.e. there is no physical keyboard)…

The Distributed Keyboard is enabled whenever you need to type, but unlike a conventional keyboard rising into view, nothing happens until you put one or more fingers anywhere on the screen. Once you do, a set of letters appears around each touch point, and all that is required to type a letter is to “slide” towards it (not necessarily far).
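One possible way to turn such a slide into a letter is to classify its direction rather than its exact end point. The sketch below assumes, hypothetically, that the letters around a touch point are spaced evenly in a ring, starting to the right of the finger and going counterclockwise, and that a slide shorter than a small threshold (`min_distance`, an arbitrary 20 px here) does not count as a keystroke. The function name `letter_for_slide` and the ring layout are illustrative only, not the author's implementation.

```python
import math
from typing import Optional, Sequence


def letter_for_slide(
    start: tuple[float, float],
    end: tuple[float, float],
    ring: Sequence[str],
    min_distance: float = 20.0,
) -> Optional[str]:
    """Pick the letter whose angular sector contains the slide direction.

    Hypothetical layout: `ring` lists the letters shown around the touch
    point, starting at the right (east) and going counterclockwise, each
    occupying an equal sector. Returns None if the finger moved less than
    `min_distance` pixels.
    """
    if not ring:
        raise ValueError("ring must contain at least one letter")
    dx = end[0] - start[0]
    dy = start[1] - end[1]  # screen y grows downward; flip so "up" is positive
    if math.hypot(dx, dy) < min_distance:
        return None
    sector = 2 * math.pi / len(ring)
    angle = math.atan2(dy, dx) % (2 * math.pi)  # 0 = east, counterclockwise
    # Shift by half a sector so each letter sits at the centre of its sector
    index = int(((angle + sector / 2) % (2 * math.pi)) // sector)
    return ring[index % len(ring)]  # modulo guards against float edge cases


if __name__ == "__main__":
    ring = list("abcdefgh")  # hypothetical 8-letter ring
    print(letter_for_slide((100, 100), (100, 50), ring))  # slide up -> c
```

Classifying by angle is what keeps the slide short: any movement past the threshold in roughly the right direction selects the letter, wherever on the screen the finger landed. With several fingers down, each touch point would simply get its own ring.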

It might look something like: